4.1. A Sampler of Panoramic Sky Surveys and Catalogs

Sky surveys, large and small, are already too numerous to cover extensively here, and more are produced steadily. In the Appendix, we provide a listing, undoubtedly incomplete, of a number of them, with Web links that may be a good starting point for further exploration. Here we describe some of the more popular panoramic (wide-field, single-pass) imaging surveys, largely for illustrative purposes, sorted by wavelength regime and in roughly chronological order.

However, our primary aim is to illustrate the remarkable richness and diversity of the field, and a dramatic growth in the data volumes, quality, and homogeneity. Surveys are indeed the backbone of contemporary astronomy.

Table 1 lists basic parameters for a number of popular surveys, at least as of this writing. It is meant as illustrative, rather than complete in any way. In the Appendix, we list a much larger number of the existing surveys and their websites, from which the equivalent information can be obtained. We also refrain from talking too much about the surveys that are still in the planning stages; the future will tell how good they will be.

4.1.1. Surveys and Catalogs in the Visible Regime

Scans of the POSS-II photographic survey plates, obtained at the Space Telescope Science Institute (STScI), led to the Digitized Palomar Observatory Sky Survey (DPOSS; Djorgovski et al. 1994, 1997a, 1999, Weir et al. 1995a; http://www.astro.caltech.edu/~george/dposs), a digital survey of the entire Northern sky in three photographic bands, calibrated by CCD observations to SDSS-like gri. The plates were scanned with 1.0 arcsec pixels, in rasters of 23,040 pixels square, with 16 bits per pixel, producing about 1 GB per plate, or about 3 TB of pixel data in total. They were processed independently at STScI for the purposes of constructing a new guide star catalog for the HST (Lasker et al. 2008), and at Caltech for the DPOSS project. Catalogs of all the detected objects on each plate were generated, down to the flux limit of the plates, which roughly corresponds to an equivalent limiting magnitude of mB ~ 22 mag. A specially developed software package, the Sky Image Cataloging and Analysis Tool (SKICAT; Weir et al. 1995c), was used to process and analyze the data. Machine learning tools (decision trees and neural nets) were used for star-galaxy classification (Weir et al. 1995b, Odewahn et al. 2004), accurate to 90% down to ~ 1 mag above the detection limit. An extensive program of CCD calibrations was done at the Palomar 60-inch telescope (Gal et al. 2005). The resulting object catalogs were combined and stored in a relational database. The DPOSS catalogs contain ~ 50 million galaxies and ~ 500 million stars, with over 100 attributes measured for each object. They generated a number of studies, e.g., a modern, objectively generated analog of the old Abell cluster catalog (Gal et al. 2009).
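The data volume quoted for the plate scans can be checked with simple arithmetic. The Python sketch below is only an illustration: the raster size and bit depth come from the text, while the function names and the use of decimal units (1 GB = 10^9 bytes) are assumptions made for this example.

```python
# Rough check of the DPOSS scan volume, using the numbers quoted in the text.
RASTER_SIDE_PIXELS = 23_040  # each plate scan is a square raster (from the text)
BITS_PER_PIXEL = 16          # from the text


def plate_size_bytes(side_pixels: int = RASTER_SIDE_PIXELS,
                     bits_per_pixel: int = BITS_PER_PIXEL) -> int:
    """Uncompressed size of one digitized plate, in bytes."""
    return side_pixels * side_pixels * bits_per_pixel // 8


def plates_for_volume(total_bytes: float) -> float:
    """Number of plates implied by a given total volume of pixel data."""
    return total_bytes / plate_size_bytes()


if __name__ == "__main__":
    print(f"one plate: {plate_size_bytes() / 1e9:.2f} GB")
    print(f"plates implied by 3 TB: {plates_for_volume(3e12):.0f}")
```

Under these assumptions a single scan comes to just over 1 GB, so the quoted total of about 3 TB corresponds to a few thousand plate scans.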

| Survey | Type | Duration | Bandpasses | Lim. flux | Area coverage | Nsources | Notes |
|---|---|---|---|---|---|---|---|
| DSS scans | Visible | 1950's-1990's | B (~ 450 nm), R (~ 650 nm), I (~ 800 nm) | 21-22 mag, 20-21 mag, 19-20 mag | Full sky | ~ 10⁹ | Scans of plates from the POSS and ESO/SERC surveys |
| SDSS-I, SDSS-II, SDSS-III | Visible | 2000-2005, 2005-2008, 2008-2014 | u (~ 355 nm), g (~ 470 nm), r (~ 620 nm), i (~ 750 nm), z (~ 890 nm) | 22.0 mag, 22.2 mag, 22.2 mag, 21.3 mag, 20.5 mag | 14,500 deg² | 4.7 × 10⁸ | Numbers quoted for DR8 (2011). In addition, spectra of ~ 1.6 million objects |
| 2MASS | Near IR | 1997-2001 | J (~ 1.25 µm), H (~ 1.65 µm), Ks (~ 2.15 µm) | 15.8 mag, 15.1 mag, 14.3 mag | Full sky | 4.7 × 10⁸ | |
| UKIDSS | Near IR | 2005-2012 | Y (~ 1.05 µm), J (~ 1.25 µm), H (~ 1.65 µm), K (~ 2.2 µm) | 20.5 mag, 20.0 mag, 18.8 mag, 18.4 mag | 7,500 deg² | ~ 10⁹ | Estim. final numbers for the LAS; deeper surveys over smaller areas also done |
| IRAS | Mid/Far IR (space) | 1983-1986 | 12 µm, 25 µm, 60 µm, 100 µm | 0.5 Jy, 0.5 Jy, 0.5 Jy, 1.5 Jy | Full sky | 1.7 × 10⁵ | |
| NVSS | Radio | 1993-1996 | 1.4 GHz | 2.5 mJy | 32,800 deg² | 1.8 × 10⁶ | Beam ~ 45 arcsec |
| FIRST | Radio | 1993-2004 | 1.4 GHz | 1 mJy | 10,000 deg² | 8.2 × 10⁵ | Beam ~ 5 arcsec |
| PMN | Radio | 1990 | 4.85 GHz | ~ 30 mJy | 16,400 deg² | 1.3 × 10⁴ | Combines several surveys |
| GALEX | UV (space) | 2003-2012 | 135 - 175 nm, 175 - 275 nm | 20.5 mag (AIS), 23 mag (MIS) | 29,000 deg² (AIS), 3,900 deg² (MIS) | 6.5 × 10⁷ (AIS), 1.3 × 10⁷ (MIS) | As of GR6 (2011); also some deeper data |
| ROSAT | X-ray (space) | 1990-1999 | 0.04 - 2 keV | ~ 10⁻¹⁴ erg cm⁻² s⁻¹ | Full sky | 1.5 × 10⁵ | Deeper, small area surveys also done |
| Fermi LAT | γ-ray (space) | 2008-? | 20 MeV to 30 GeV | 4 × 10⁻⁹ erg cm⁻² s⁻¹ | Full sky | ~ 2 × 10³ | LAT only; GBM data in addition |

The United States Naval Observatory astrometric catalogs (USNO-A2 and its improved version, USNO-B1; http://www.nofs.navy.mil/data/FchPix) were derived from the POSS-I, POSS-II and ESO/SERC Southern sky survey plate scans, done using the Precision Measuring Machine (PMM) built and operated by the United States Naval Observatory Flagstaff Station (Monet et al. 2003). They contain about a billion sources over the entire sky down to a limiting magnitude of mB ~ 20 mag, and they are now commonly used to derive astrometric solutions for optical and NIR images. A combined astrometric data set from several major modern catalogs is currently being served by the USNO's Naval Observatory Merged Astrometric Dataset (NOMAD; http://www.usno.navy.mil/USNO/astrometry/optical-IR-prod/nomad).

The Sloan Digital Sky Survey (SDSS; Gunn et al. 1998, 2006, York et al. 2000, Fukugita et al. 1996; http://sdss.org) is a large international astronomical collaboration focused on constructing the first CCD photometric survey of high Galactic latitudes, mostly in the Northern sky. The survey and its extensions (SDSS-II and SDSS-III) eventually covered ~ 14,500 deg², i.e., more than a third of the entire sky. A dedicated 2.5 m telescope at Apache Point, New Mexico, was specially designed to take wide field (3° × 3°) images using a mosaic of thirty 2048 × 2048 pixel CCDs, with 0.396 arcsec pixels, and with additional devices used for astrometric calibration. The imaging data were taken in the drift scanning mode along great circles on the sky. The imaging survey uses 5 passbands (u' g' r' i' z'), with limiting magnitudes of 22.0, 22.2, 22.2, 21.3, and 20.5 mag, respectively. The spectroscopic component of the survey is described below. The total raw data volume is several tens of TB, with an at least comparable amount of derived data products.
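The statement that ~ 14,500 deg² is more than a third of the sky follows from the area of the full celestial sphere, 4π steradians or about 41,253 deg². A minimal Python sketch of that conversion is given below; the function and constant names are ours, chosen for illustration, and the areas are those quoted in the text and in Table 1.

```python
import math

# Whole sky in square degrees: 4*pi steradians, with (180/pi)^2 deg^2 per steradian.
FULL_SKY_DEG2 = 4.0 * math.pi * (180.0 / math.pi) ** 2


def sky_fraction(area_deg2: float) -> float:
    """Fraction of the celestial sphere covered by a footprint of the given area."""
    if area_deg2 < 0:
        raise ValueError("area must be non-negative")
    return area_deg2 / FULL_SKY_DEG2


if __name__ == "__main__":
    # Survey areas as quoted in the text and in Table 1.
    for name, area in [("SDSS", 14_500), ("NVSS", 32_800), ("FIRST", 10_000)]:
        print(f"{name}: {sky_fraction(area):.1%} of the sky")
```

The same helper can be applied to any of the survey footprints listed in this section, which makes it easier to compare surveys whose coverage is quoted in different ways.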

The initial survey (SDSS-I, 2000-2005) covered ~ 8,000 deg². SDSS-II (2005-2008) consisted of three components: the Sloan Legacy Survey, which expanded the area coverage to ~ 8,400 deg² and catalogued 230 million objects; the Sloan Extension for Galactic Understanding and Exploration (SEGUE), which obtained almost a quarter million spectra over ~ 3,500 deg², largely for studies of Galactic structure; and the Sloan Supernova Survey, which confirmed 500 Type Ia SNe. The latest survey extension, SDSS-III (2008-2014), is still ongoing at this time.

The data have been made available to the public through a series of well-documented releases; the latest one as of this writing, DR8, was released in 2011. In all, by 2011, photometric measurements for ~ 470 million unique objects and ~ 1.6 million spectra had been obtained.

More than perhaps any other survey, SDSS deserves the credit for transforming the culture of astronomy with regard to sky surveys: they are now regarded as fundamental data sources with a rich information content. SDSS enabled, and continues to enable, a vast range of science, not just by the members of the SDSS team (of which there are many), but also by the rest of the astronomical community, spanning topics from asteroids to cosmology. Thanks to its public data releases, SDSS may be the most productive astronomical project so far.

The Panoramic Survey Telescope & Rapid Response System (Pan-STARRS; http://pan-starrs.ifa.hawaii.edu; Kaiser et al. 2002) is a wide-field panoramic survey developed at the University of Hawaii's Institute for Astronomy, and operated by an international consortium of institutions. It is envisioned as a set of four 1.8 m telescopes with a 3° field of view each, observing the same region of the sky simultaneously, each equipped with a ~ 1.4 Gigapixel CCD camera with 0.3 arcsec pixels. As of this writing, only one of the telescopes (PS1) is operational, at Haleakala Observatory on Maui, Hawaii; it started science operations in 2010. The survey may be transferred to the Mauna Kea Observatory, if additional telescopes are built. The CCD cameras, consisting of an array of 64, 600 × 600 pixel CCDs, incorporate a novel technology, Orthogonal Transfer CCDs, that can be used to compensate for the atmospheric tip-tilt distortions (Tonry et al. 1997), although that feature has not yet been used for regular data taking. PS1 can cover up to 6,000 deg² per night and generate up to several TB of data per night, but not all images are saved, and the expected final output is estimated to be ~ 1 PB per year.

The primary goal of the PS1 is to survey ~ ¾ of the entire sky (the "3π" survey) with 12 epochs in each of the 5 bands (grizy). The coadded images should reach considerably deeper than SDSS. A dozen key projects, some requiring additional observations, are also under way. The survey also has a significant time-domain component. The data access is restricted to the consortium members until the completion of the "3π" survey.

Its forthcoming Southern sky counterpart is SkyMapper (http://msowww.anu.edu.au/skymapper), developed by Brian Schmidt and collaborators at the Australian National University. It is undergoing the final commissioning steps as of this writing, and it will undoubtedly be a significant contributor when it starts full operations. The fully automated 1.35 m wide-angle telescope is located at Siding Spring Observatory, Australia. It has a ~ 270 Megapixel CCD mosaic camera that covers ~ 5.7 deg² in a single exposure, with 0.5 arcsec pixels. SkyMapper will cover the entire southern sky 36 times in 6 filters (SDSS g' r' i' z', a Strömgren system-like u, and a unique narrow v band near 4000 Å). It will generate ~ 100 MB of data per second during every clear night, totaling about 500 Terabytes of data at the end of the survey. A distilled version of the survey data will be made publicly available. Its scientific goals include discovering dwarf planets in the outer solar system, tracking asteroids, creating a comprehensive catalog of the stars in our Galaxy, dark matter studies, and so on.
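To put the quoted data rate and the total volume in relation, one can ask how much acquisition time at ~ 100 MB/s adds up to ~ 500 TB. The sketch below does this arithmetic in Python; decimal units and the 8-hour night used in the example are assumptions made here for illustration, not survey parameters.

```python
MB = 1e6   # decimal megabyte (an assumption about the units used in the text)
TB = 1e12  # decimal terabyte (same assumption)


def seconds_to_accumulate(total_bytes: float, rate_bytes_per_s: float) -> float:
    """Acquisition time needed to collect total_bytes at a constant data rate."""
    if rate_bytes_per_s <= 0:
        raise ValueError("rate must be positive")
    return total_bytes / rate_bytes_per_s


def equivalent_nights(total_bytes: float, rate_bytes_per_s: float,
                      hours_per_night: float) -> float:
    """Same quantity expressed in nights of a given (hypothetical) length."""
    seconds = seconds_to_accumulate(total_bytes, rate_bytes_per_s)
    return seconds / (hours_per_night * 3600.0)


if __name__ == "__main__":
    t = seconds_to_accumulate(500 * TB, 100 * MB)
    print(f"{t:.2e} s, i.e. about {t / 3600.0:.0f} hours at the quoted rate")
    # 8 hours per night is a placeholder value, not a figure from the survey.
    print(f"{equivalent_nights(500 * TB, 100 * MB, 8.0):.0f} nights of 8 hours")
```

The result is an order-of-magnitude figure only, since the actual rate varies with weather, overheads, and which data products are retained.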

Another Southern sky facility is the Very Large Telescope (VLT) Survey Telescope (VST; http://www.eso.org/public/teles-instr/surveytelescopes/vst.html) that is just starting operations as of this writing (2011). This 2.6 m telescope covers a ~ 1 deg² field, with a ~ 270 Megapixel CCD mosaic consisting of 32, 2048 × 4096 pixel CCDs. The VST ATLAS survey will cover 4,500 deg² of the Southern sky in five filters (ugriz) to depths comparable to those of the SDSS. A deeper survey, KIDS, will reach ~ 2 - 3 mag deeper over 1,500 deg² in ugri bands. An additional survey, VPHAS+, will cover 1,800 deg² along the Galactic plane in ugri and Hα bands, to depths comparable to the ATLAS survey. These and other VST surveys will also be complemented by near-infrared data from the VISTA survey, described below. They will provide an imaging base for spectroscopic surveys by the VLT. Scientific goals include studies of dark matter and dark energy, gravitational lensing, quasar searches, structure of our Galaxy, etc.
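The camera parameters quoted for the VST are mutually consistent, as a short calculation shows. The Python sketch below counts the pixels in the mosaic and derives the mean pixel scale implied by a ~ 1 deg² field; it assumes, for simplicity, that the pixels tile the field exactly with no gaps between CCDs, and the function names are illustrative.

```python
import math


def mosaic_pixels(n_ccds: int, nx: int, ny: int) -> int:
    """Total number of pixels in a mosaic of identical CCDs."""
    return n_ccds * nx * ny


def implied_pixel_scale_arcsec(fov_deg2: float, n_pixels: int) -> float:
    """Mean pixel scale if the pixels tiled the field exactly (no gaps, no overlap)."""
    return 3600.0 * math.sqrt(fov_deg2 / n_pixels)


if __name__ == "__main__":
    n_pix = mosaic_pixels(32, 2048, 4096)  # CCD format quoted in the text
    print(f"{n_pix / 1e6:.0f} Megapixels")
    print(f"~{implied_pixel_scale_arcsec(1.0, n_pix):.2f} arcsec per pixel")
```

The same two functions can be applied to the other mosaic cameras described in this section, keeping in mind that real focal planes have gaps and that quoted fields of view are approximate.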

An ambitious new Dark Energy Survey (DES; http://www.darkenergysurvey.org) should start in 2012, using the purpose-built Dark Energy Camera (DECam) on the Blanco 4 m telescope at CTIO. It is a large international collaboration, involving 23 institutions. DECam is a 570 Megapixel camera with 74 CCDs, with a 2.2 deg² FOV. The primary goal is to catalog 5,000 deg² in the Southern hemisphere, in grizy bands, in an area that overlaps with several other surveys, for maximum leverage. It is expected to detect over 300 million galaxies during its 525 nights, to find and measure ~ 3,000 SNe, and to use several observational probes of dark energy in order to constrain its physical nature.

The GAIA mission (http://gaia.esa.int), expected to be launched in 2012, should revolutionize astrometry and provide fundamental data for a better understanding of the structure of our Galaxy, distance scale, stellar evolution, and other topics. It will measure positions with a 20 microarcsec accuracy for about a billion stars (i.e., at the depth roughly matching the POSS surveys), from which parallaxes and proper motions will be derived. In addition, it will obtain low resolution spectra for many objects. It is expected to cover the sky ~ 70 times over a projected 5 year mission, and thus discover numerous variable and transient sources.

Many other modern surveys in the visible regime invariably have a strong synoptic (time domain) component, reflecting some of their scientific goals. We describe some of them below.

The Two Micron All-Sky Survey (2MASS; 1997-2002; http://www.ipac.caltech.edu/2mass; Skrutskie et al. 2006) is a near-IR (J, H, and Ks bands) all-sky survey, done as a collaboration between the University of Massachusetts, which constructed the observatory facilities and operated the survey, and the Infrared Processing and Analysis Center (IPAC) at Caltech, which is responsible for all data processing and archives. It utilized two highly automated 1.3 m telescopes, one at Mt. Hopkins, AZ and one at CTIO, Chile. Each telescope was equipped with a three-channel camera with HgCdTe detectors, and was capable of observing the sky simultaneously at J, H, and Ks with 2 arcsec pixels. It remains the only modern, ground-based sky survey that covered the entire sky. The survey generated ~ 12 TB of imaging data, a point source catalog of ~ 300 million objects, with a 10-σ limit of Ks < 14.3 mag (Vega zero point), and a catalog of ~ 500,000 extended sources. All of the data are publicly available via a web interface to a powerful database system at IPAC.

The United Kingdom Infrared Telescope (UKIRT) Infrared Deep Sky Survey (UKIDSS; 2005-2012; Lawrence et al. 2007; http://www.ukidss.org) is a NIR sky survey. Its wide-field component covers about 7,500 deg² of the Northern sky, extending over both high and low Galactic latitudes, in the YJHK bands down to the limiting magnitude of K = 18.3 mag (Vega zero point). UKIDSS is made up of five surveys and includes two deep extra-Galactic elements, one covering 35 deg² down to K = 21 mag, and the other reaching K = 23 mag over 0.77 deg². The survey instrument is WFCAM on UKIRT in Hawaii. It has four 2048 × 2048 IR arrays; the pixel scale of 0.4 arcsec gives an exposed solid angle of 0.21 deg². Four of the principal scientific goals of UKIDSS are: finding the coolest and the nearest brown dwarfs; studies of high-redshift dusty starburst galaxies; studies of elliptical galaxies and galaxy clusters at redshifts 1 < z < 2; and finding the highest-redshift quasars, at z ~ 7. The survey succeeded on all counts. The data were made available to the entire ESO community as soon as they were entered into the archive, followed by a world-wide release 18 months later.
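The exposed solid angle of 0.21 deg² follows directly from the detector format and pixel scale given above. The Python sketch below reproduces it and also gives a lower bound on the number of pointings needed for the wide-field component; the pointing count ignores overlaps and the gaps between the arrays, and the function names are chosen here for illustration.

```python
import math


def exposed_solid_angle_deg2(n_arrays: int, pixels_per_side: int,
                             pixel_scale_arcsec: float) -> float:
    """Sky area covered in one exposure by n identical square arrays."""
    side_deg = pixels_per_side * pixel_scale_arcsec / 3600.0
    return n_arrays * side_deg ** 2


def min_pointings(survey_area_deg2: float, footprint_deg2: float) -> int:
    """Lower bound on the number of pointings (no overlaps, no lost area)."""
    return math.ceil(survey_area_deg2 / footprint_deg2)


if __name__ == "__main__":
    omega = exposed_solid_angle_deg2(4, 2048, 0.4)  # WFCAM values from the text
    print(f"exposed solid angle: {omega:.2f} deg^2")
    print(f"at least {min_pointings(7500, omega)} pointings for 7,500 deg^2")
```

Because the four arrays are separated on the focal plane, a real tiling pattern needs several offset pointings to fill a contiguous area, so the actual number of exposures is higher than this bound.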

The Visible and Infrared Survey Telescope for Astronomy (VISTA; http://www.vista.ac.uk) is a 4.1 m telescope at the ESO's Paranal Observatory. Its 67 Megapixel IR camera uses 16 IR detectors and covers a FOV of ~ 1.65° with 0.34 arcsec pixels, in the ZYJHKs bands and a 1.18 µm narrow band. It will conduct a number of distinct surveys, the widest being the VISTA Hemisphere Survey (VHS), covering the entire Southern sky down to limiting magnitudes of ~ 20 - 21 mag in the YJHKs bands, and the deepest being Ultra-VISTA, which will cover a 0.73 deg² field down to limiting magnitudes of ~ 26 mag in the YJHKs bands and the 1.18 µm narrow band. VISTA is expected to produce data rates exceeding 100 TB/year, with the publicly available data distributed through the ESO archives.

A modern, space-based all-sky survey is the Wide-field Infrared Survey Explorer (WISE; 2009-2011; Wright et al. 2010; http://wise.ssl.berkeley.edu). It mapped the sky in the 3.4, 4.6, 12, and 22 µm bands, with an angular resolution of 6.1, 6.4, 6.5, and 12.0 arcsec in the four bands, the number of passes depending on the coordinates. It achieved 5-σ point source sensitivities of about 0.08, 0.11, 1 and 6 mJy, respectively, in unconfused regions. It detected over 250 million objects, with over 2 billion individual measurements, ranging from near-Earth asteroids, to brown dwarfs, ultraluminous starbursts, and other interesting types of objects. Given its uniform, all-sky coverage, this survey is likely to play an important role in the future. The data are now publicly available.
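The WISE sensitivities are quoted as flux densities in mJy, whereas most limits elsewhere in this section are in magnitudes. For a rough comparison, a flux density can be converted to the AB magnitude system using the standard AB zero point of 3631 Jy. The Python sketch below is a convenience conversion added here for illustration; it is not how the survey itself reports its limits, and the function name is ours.

```python
import math

AB_ZERO_POINT_JY = 3631.0  # flux density of a source with m_AB = 0


def mjy_to_ab_mag(flux_mjy: float) -> float:
    """AB magnitude corresponding to a flux density given in mJy."""
    if flux_mjy <= 0:
        raise ValueError("flux density must be positive")
    return -2.5 * math.log10(flux_mjy * 1e-3 / AB_ZERO_POINT_JY)


if __name__ == "__main__":
    # 5-sigma point source sensitivities quoted in the text, by band.
    for band_um, limit_mjy in [(3.4, 0.08), (4.6, 0.11), (12, 1.0), (22, 6.0)]:
        print(f"{band_um} um: {limit_mjy} mJy -> m_AB ~ {mjy_to_ab_mag(limit_mjy):.1f}")
```

Note that these are AB magnitudes; limits quoted on the Vega system, as for 2MASS and UKIDSS above, differ by band-dependent offsets that are not modeled here.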

Two examples of modern, space-based IR surveys over limited areas are the Spitzer Wide-area InfraRed Extragalactic survey (SWIRE; http://swire.ipac.caltech.edu; Lonsdale et al. 2003), which covered 50 deg² in 7 mid/far-IR bandpasses, ranging from 3.6 µm to 160 µm, and the Spitzer Galactic Legacy Infrared Mid-Plane Survey Extraordinaire (GLIMPSE; http://www.astro.wisc.edu/sirtf/), which covered ~ 240 deg² of the Galactic plane in 4 mid-IR bandpasses, ranging from 3.6 µm to 8.0 µm. Both were followed up at a range of other wavelengths, and provided new insights into obscured star formation in the universe.

4.1.3. Surveys and Catalogs in the Radio

The Parkes Radio Sources (PKS, http://archive.eso.org/starcat/astrocat/pks.html) is a radio catalog compiled from major radio surveys taken with the Parkes 64 m radio telescope at 408 MHz and 2700 MHz over a period of almost 20 years. It consists of 8,264 objects covering all the sky south of Dec = +27° except for the Galactic Plane and Magellanic Clouds. Discrete sources were selected with flux densities in excess of ~ 50 mJy. It has since been supplemented (PKSCAT90) with improved positions, optical identifications and redshifts, as well as flux densities at other frequencies, to give a frequency coverage of 80 MHz - 22 GHz.

Numerous other continuum surveys have been performed. An example is the Parkes-MIT-NRAO (PMN, http://www.parkes.atnf.csiro.au/observing/databases/pmn/pmn.html) radio continuum surveys, made with the NRAO 4.85 GHz seven-beam receiver on the Parkes telescope during June and November 1990. Maps covering four declination bands were constructed from the survey scans, with the total survey coverage over -87.5° < Dec < +10°. A catalog of 50,814 discrete sources with angular sizes less than approximately 15 arcmin, and a flux density greater than about 25 mJy (the flux limit varies for each band, but is typically this value) was derived from these maps. The positional accuracy is close to 10 arcsec in each coordinate. The 4.85 GHz weighted source counts between 30 mJy and 10 Jy agree well with previous results.

The National Radio Astronomy Observatory (NRAO) Very Large Array (VLA) Sky Survey (NVSS; Condon et al. 1998; http://www.cv.nrao.edu/nvss) is a publicly available radio continuum survey at 1.4 GHz, covering the sky north of Dec = -40°, i.e., ~ 32,800 deg². The principal NVSS data products are a set of 2326 continuum map data cubes, each covering an area of 4° × 4° with three planes containing the Stokes I, Q, and U images, and a catalog of discrete sources in them. Every large image was constructed from more than one hundred of the smaller, original snapshot images (over 200,000 of them in total). The survey catalog contains over 1.8 million discrete sources with total intensity and linear polarization image measurements (Stokes I, Q, and U), with a resolution of 45 arcsec, and a completeness limit of about 2.5 mJy. The NVSS survey was performed as a community service, with the principal data products being released to the public by anonymous FTP as soon as they were produced and verified.

The Faint Images of the Radio Sky at Twenty-cm (FIRST; http://sundog.stsci.edu, Becker et al. 1995) is a publicly available radio snapshot survey performed at the NRAO VLA facility in a configuration that provides higher spatial resolution than the NVSS, at the expense of a smaller survey footprint. It covers ~ 10,000 deg² of the North and South Galactic Caps with a ~ 5 arcsec resolution. A final atlas of radio image maps with 1.8 arcsec pixels is produced by coadding the twelve images adjacent to each pointing center. A source catalog, including peak and integrated flux densities and sizes derived from fitting a two-dimensional Gaussian to each source, is generated from the atlas. The survey catalog contains around one million sources, with positions accurate to better than 1 arcsec. Approximately 15% of the sources have optical counterparts at the limit of the POSS-I plates; unambiguous optical identifications (< 5% false detection rates) are achievable to V ~ 24 mag. The survey area has been chosen to coincide with that of the SDSS. At the magnitude limit of the SDSS, approximately 50% of the FIRST sources have optical counterparts.

Two examples of modern H I 21 cm line surveys are the HI Parkes All Sky Survey (HIPASS; http://aus-vo.org/projects/hipass, http://www.atnf.csiro.au/research/multibeam/release/) and the Arecibo Legacy Fast ALFA survey (ALFALFA; http://egg.astro.cornell.edu; Giovanelli et al. 2005). These surveys cover redshifts up to z ~ 0.05 - 0.06, detecting tens of thousands of H I selected galaxies, high-velocity clouds, etc.

The new generation of ground-based radio sky surveys is intrinsically synoptic in nature; we discuss some of them below. A number of experiments are also addressing the epoch of reionization. The currently ongoing Planck mission (http://www.rssd.esa.int/Planck), in addition to its primary scientific goals of CMB-based cosmology, will also represent an excellent radio survey at high frequencies.

4.1.4. Surveys at Higher Energies

Numerous space missions have surveyed the sky at wavelengths ranging from UV to γ-rays. Some of the more modern, panoramic ones include:

The Galaxy Evolution Explorer (GALEX; Martin et al. 2005; http://www.galex.caltech.edu), launched in 2003, is the first (and so far the only) nearly-all-sky survey UV mission. Data are obtained in two bands (1350-1750 and 1750-2750 Å) using microchannel plate detectors that provide time-resolved imaging. The All-sky Imaging Survey (AIS; it excludes some fields containing bright stars) reaches a depth comparable to the POSS surveys (mAB ~ 20.5 mag); a medium depth survey covers ~ 3,900 deg² down to mAB ~ 23 mag, and a deep imaging survey covers ~ 350 deg² down to mAB ~ 25 mag. In addition, a survey of several hundred nearby galaxies and a low-resolution (R = 100-200) slitless grism spectroscopic survey were performed over a limited area. The data are released in roughly yearly intervals, with the final one (GR7) expected in 2012.

ROSAT (Röntgensatellit; 1990-1999; http://www.dlr.de/dlr/en/desktopdefault.aspx/tabid-10424/) was a satellite X-ray telescope led by the German Aerospace Center, with instruments built by Germany, the UK and the US. It carried an imaging X-ray Telescope (XRT) with three focal plane instruments, two Position Sensitive Proportional Counters (PSPC) and a High Resolution Imager (HRI), as well as a Wide Field Camera (WFC), an extreme ultraviolet telescope co-aligned with the XRT that covered the wave band between 300 and 60 angstroms (0.042 to 0.21 keV). The X-ray mirror assembly was a grazing incidence four-fold nested Wolter I type telescope with an 84 cm diameter aperture and 240 cm focal length. The angular resolution was better than 5 arcsec (half energy width). The XRT assembly was sensitive to X-rays between 0.1 and 2 keV.
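The energy limits quoted for the WFC (0.042 to 0.21 keV) and for the XRT (0.1 to 2 keV) can be converted to wavelengths using λ = hc/E, with hc ≈ 12.398 keV Å. A minimal Python sketch of this conversion follows; the function and constant names are illustrative.

```python
HC_KEV_ANGSTROM = 12.398419843  # Planck constant times speed of light, in keV * angstrom


def kev_to_angstrom(energy_kev: float) -> float:
    """Photon wavelength in angstroms for a photon energy in keV."""
    if energy_kev <= 0:
        raise ValueError("energy must be positive")
    return HC_KEV_ANGSTROM / energy_kev


def angstrom_to_kev(wavelength_angstrom: float) -> float:
    """Photon energy in keV for a wavelength in angstroms."""
    if wavelength_angstrom <= 0:
        raise ValueError("wavelength must be positive")
    return HC_KEV_ANGSTROM / wavelength_angstrom


if __name__ == "__main__":
    # Band edges as quoted in the text.
    for label, e_kev in [("WFC low", 0.042), ("WFC high", 0.21),
                         ("XRT low", 0.1), ("XRT high", 2.0)]:
        print(f"{label}: {e_kev} keV -> {kev_to_angstrom(e_kev):.1f} angstrom")
```

The WFC limits correspond to roughly 300 and 60 Å, in the extreme ultraviolet, while the XRT band maps to roughly 124 to 6 Å.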

The ROSAT All Sky Survey (Voges et al. 1999) was publicly released in 2000, and it is currently available from the MPE archive and the HEASARC archive at GSFC. It consists of 1378 distinct fields, with the bulk of the all-sky survey obtained by PSPC-C in scanning mode. The telescope detected more than 150,000 X-ray sources, ~ 20 times more than were previously known. The survey enabled detailed morphological studies of supernova remnants and clusters of galaxies; detection of shadowing of diffuse X-ray emission by molecular clouds; detection of isolated neutron stars; discovery of X-ray emission from comets; and many other results.

4.2. Synoptic Sky Surveys and Exploration of the Time Domain

A systematic exploration of the time domain in astronomy is now one of the most exciting and rapidly growing areas of astrophysics, and a vibrant new observational frontier (Paczynski 2000). A number of important astrophysical phenomena can be discovered and studied only in the time domain, ranging from exploration of the Solar System to cosmology and extreme relativistic phenomena. In addition to the studies of known, interesting time domain phenomena, e.g., supernovae and other types of cosmic explosions, there is a real and exciting possibility of discovery of new types of objects and phenomena. As already noted, opening new domains of the observable parameter space often leads to new and unexpected discoveries.

The field has been fueled by the advent of the new generation of digital synoptic sky surveys, which cover the sky many times, as well as the ability to respond rapidly to transient events using robotic telescopes. This new growth area of astrophysics has been enabled by information technology, continuing evolution from large panoramic digital sky surveys, to panoramic digital cinematography of the sky, opening the great new discovery space (Djorgovski et al. 2012).

Numerous surveys, studies, and experiments have been conducted in this arena, are in progress now, or are in planning stages, indicating the growing interest in time-domain astronomy, leading to the Large Synoptic Survey Telescope (LSST; Tyson et al. 2006, Ivezic et al. 2008). Today's surveys are opening the time domain frontier, yielding exciting results, and can be used to refine the scientific and methodological strategies for the future.

The key to progress in this emerging arena of astrophysics is the availability of substantial event data streams generated by panoramic digital synoptic sky surveys, coupled with a rapid follow-up of potentially interesting events (photometric, spectroscopic, and multi-wavelength). This, in turn, requires a rapid, real-time processing of massive sky survey data streams, with event detection, filtering, characterization, and rapid dissemination to the astronomical community. Over the past few years, we have been developing both synoptic sky survey data streams, and cyber-infrastructure needed for the rapid distribution and characterization of transient events and phenomena.

As in the previous subsection, here we describe some of the recent and ongoing synoptic sky surveys. This list is meant as illustrative, rather than complete, and the interested reader can follow additional links listed in the Appendix.

4.2.1. General Synoptic Surveys in the Visible Regime

Many current wide-field transient surveys are targeted toward the discovery and characterization of GRB afterglows. One example is the Robotic Optical Transient Search Experiment (ROTSE-III; Akerlof et al. 2003; http://www.rotse.net), which uses 4 telescopes with a FOV = 3.4 deg² to survey 1260 deg² to R ~ 17.5 mag (e.g., Rykoff et al. 2005; Yost et al. 2007). ROTSE searches for astrophysical optical transients on time scales of a fraction of a second to a few hours. While the primary incentive of this experiment has been to find GRB afterglows, additional variability studies have been enabled, e.g., searches for RR Lyrae.

The Palomar-Quest (PQ; Djorgovski et al. 2008; http://palquest.org) survey was a collaborative project between groups at Yale University and Caltech, with an extended network of collaborations with other groups world-wide. The data were obtained at the Palomar Observatory's Samuel Oschin telescope (the 48-inch Schmidt) using the QUEST-2, 112-CCD, 161 Mpix camera (Baltay et al. 2007). Approx. 45% of the telescope time was used for the PQ survey, with the rest being used by the NEAT survey for near-Earth asteroids, and miscellaneous other projects. The survey started in the late summer of 2003, and ended in September 2008.

In the first phase (2003-2006), data were obtained in the drift scan mode in 4.6° wide strips of a constant Dec, in the range -25° < Dec < +25°, excluding the Galactic plane. The total area coverage is ~ 15,000 deg2, with multiple passes, typically 5 - 10, but up to 25, with time baselines ranging from hours to years. Typical area coverage rate was up to ~ 500 deg2/night in 4 filters. The raw data rate was on average ~ 70 GB per clear night. About 25 TB of usable data have been collected in the drift scan mode. Two filter sets were used, Johnson UBRI and SDSS griz. Effective exposures are ~ 150/cos δ sec per pass, with limiting magnitudes of r ~ 21.5, i ~ 20.5, z ~ 19.5, R ~ 22, and I ~ 21 mag, depending on the seeing, lunar phase, etc. Photometric calibrations were done by matching to the SDSS. In the second phase of the survey (2007-2008), data were obtained mostly in the traditional point-and-track mode, in a single, wide-band red filter (RG610). The coverage and the cadence were largely optimized for the nearby supernova search, in collaboration with the LBNL NSNF group, and a search for dwarf planets, in collaboration with M. Brown at Caltech.
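The declination dependence of the drift-scan exposure quoted above can be illustrated with a short calculation. This is only a sketch: the function name is hypothetical, and the 150 sec equatorial value is the approximate figure given in the text, not an instrument specification.

```python
import math

def drift_scan_exposure_s(dec_deg, equatorial_exposure_s=150.0):
    """Approximate effective drift-scan exposure per pass at a given Dec.

    The sky drifts across the CCD more slowly away from the celestial
    equator, so the crossing time scales as 1 / cos(Dec). The default of
    150 s is the approximate equatorial value quoted in the text.
    """
    return equatorial_exposure_s / math.cos(math.radians(dec_deg))

# The PQ drift scans covered -25 deg < Dec < +25 deg.
for dec in (0.0, 10.0, 25.0):
    print(f"Dec = {dec:+5.1f} deg: ~{drift_scan_exposure_s(dec):.0f} s per pass")
```

Over the PQ declination range the exposure per pass therefore varies by only about 10%.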

Data were processed with several different pipelines, optimized for different scientific goals: the Yale pipeline (Andrews et al. 2007), designed for a search for gravitationally lensed quasars; the Caltech real-time pipeline, used for real-time detections of transient events; and the LBNL NSNF pipeline, based on image subtraction and designed for detection of nearby SNe. The data are being publicly released. Coadded images (the DeepSky project, Nugent et al., in prep.; http://supernova.lbl.gov/~nugent/deepsky.html) reach to R ~ 23 mag over ~ 20,000 deg2.

The Nearby Supernova Factory (NSNF; http://snfactory.lbl.gov; Aldering et al. 2002), operated by the Lawrence Berkeley National Laboratory (LBNL), searches for type Ia SNe in the redshift range 0.03 < z < 0.08, in order to establish the low-redshift anchor of the SN Ia Hubble diagram. This experiment ran from 2003 to 2008 using data obtained by the NEAT and PQ surveys, resulting in several hundred spectroscopically confirmed SNe.

The Palomar Transient Factory (PTF; http://www.astro.caltech.edu/ptf; Rau et al. 2009, Law et al. 2009) operates in a way that is very similar to the PQ survey, on the same telescope, but with a much better camera that was previously used on the CFHT. The data are taken in a point-and-stare mode, with 2 exposures per field in a given night, mostly in the broad R and G bands. PTF reaches a comparable depth to its predecessor, PQ, and covers a few hundred deg2/night. The overall area coverage is ~ ½ of the entire sky. The survey mostly uses a cadence optimized for SN discovery. The principal data processing pipeline is an updated version of the LBNL NSNF image subtraction pipeline. The survey has been very productive, but the data are proprietary to the PTF consortium, at least as of this writing.

The Catalina Real-Time Transient Survey (CRTS; Drake et al. 2009, 2012; Djorgovski et al. 2011, Mahabal et al. 2011; http://crts.caltech.edu) leverages existing synoptic telescopes and image data resources from the Catalina Sky Survey (CSS) for near-Earth objects and potentially hazardous asteroids (NEO/PHA), conducted by Edward Beshore, Steve Larson, and their collaborators at the Univ. of Arizona. The Solar System objects remain the domain of the CSS survey, while CRTS aims to detect astrophysical transient and variable objects, using the same data stream. Real-time processing, characterization, and distribution of these events, as well as follow-up observations of selected events, constitute the CRTS.

CRTS utilizes three wide-field telescopes: the 0.68-m Schmidt at Catalina Station, AZ (CSS), the 0.5-m Uppsala Schmidt (Siding Spring Survey, SSS) at Siding Spring Observatory, NSW, Australia, and the Mt. Lemmon Survey (MLS), a 1.5-m reflector located on Mt. Lemmon, AZ. Each telescope employs a camera with a single, cooled, 4096 × 4096 pixel, back-illuminated, unfiltered CCD. They are operated for 23 nights per lunation, centered on new moon. Most of the observable sky is covered up to 4 times per lunation. The total area coverage is ~ 30,000 deg2, as it excludes the Galactic plane within |b| < 10° - 15°. In a given night, 4 images of the same field are taken, separated by ~ 10 min, for a total time baseline of ~30 min between first and last images. The combined data streams cover up to 2,500 deg2/night to a limiting magnitude of V ~ 19 - 20 mag, and up to 275 deg2/night to V ~ 21.5 mag. On time scales of a few weeks, the combined survey covers most of the available sky from Arizona and Australia, with a few sets of exposures.

Optical transients (OTs) and highly variable objects (HVOs) are detected as sources that display significant changes in brightness, as compared to the baseline comparison images and catalogs with significantly fainter limiting magnitudes; these are obtained by median stacking of > 20 previous observations, and reach ~ 2 mag deeper. The search is performed in the catalog domain, but an image subtraction pipeline is also being developed. Data cover time baselines from 10 min to several years.
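The two ideas in this paragraph, median stacking to build a clean baseline and a catalog-domain comparison against it, can be sketched as follows. This is an illustration only, not the CRTS pipeline: the function names, the toy pixel values, and the 1 mag brightening threshold are all assumptions made for the example.

```python
import statistics

def median_stack(frames):
    """Pixel-by-pixel median of equally sized frames (flat pixel lists).

    The median rejects outliers present in only a few frames, such as
    cosmic rays, moving objects, or the transients themselves.
    """
    return [statistics.median(pixels) for pixels in zip(*frames)]

def flag_transients(new_mags, baseline_mags, min_brightening_mag=1.0):
    """Return indices of sources that brightened by at least the threshold.

    Magnitudes decrease as sources brighten, so the brightening is
    (baseline - new). The default threshold is an illustrative assumption.
    """
    return [i for i, (new, ref) in enumerate(zip(new_mags, baseline_mags))
            if ref - new >= min_brightening_mag]

# Toy baseline: 21 frames of a flat 3-pixel patch, one hit by an outlier.
frames = [[100, 100, 100] for _ in range(21)]
frames[7][1] = 5000
print(median_stack(frames))

# Toy catalogs: only the second source brightened significantly.
print(flag_transients([18.0, 16.5, 19.2], [18.1, 19.0, 19.2]))
```

A catalog-domain search of this kind is cheap, since it compares measured source properties rather than pixels, which is one reason it can run in real time.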

CRTS practices an open data philosophy: detected transients are published electronically in real time, using a variety of mechanisms, so that they can be followed up by anyone. Archival light curves for ~ 500 million objects and all of the images are also being made public.

The All Sky Automated Survey (ASAS; http://www.astrouw.edu.pl/asas/; Pojmanski 1997) covers the entire sky using a set of small telescopes (telephoto lenses) at Las Campanas Observatory in Chile, and Haleakala, Maui, in V and I bands. The limiting magnitudes are V ~ 14 mag and I ~ 13 mag, with ~ 14 arcsec/pixel sampling. The ASAS-3 Photometric V-band Catalogue contains over 15 million light curves; a derived product is the on-line ASAS Catalog of Variable Stars (ACVS), with ~ 50 thousand selected objects.

Supernova (SN) searches have been providing science drivers for a number of surveys. We recall that the original Zwicky survey from Palomar was motivated by the need to find more SNe for the follow-up studies. In addition to those described in Sec. 4.2.1, some of the dedicated SN surveys include:

The Calan/Tololo Supernova Search (Hamuy et al. 1993) operated from 1989 to 1993, and provided some of the key foundations for the subsequent use of type Ia SNe as standard candles, which eventually led to the evidence for dark energy.

The Supernova Cosmology Project (SCP, Perlmutter et al. 1998, 1999) was designed to measure the expansion of the universe using high redshift type-Ia SNe as standard candles. Simultaneously, the High-z Supernova Team (Filippenko & Riess 1998) was engaged in the same pursuit. While the initial SCP results were inconclusive (Perlmutter et al. 1997), in 1998 both experiments discovered that the expansion of the universe was accelerating (Perlmutter et al. 1998, Riess et al. 1998), thus providing a key piece of evidence for the existence of the dark energy (DE). These discoveries are documented and discussed very extensively in the literature.

The Equation of State: SupErNovae trace Cosmic Expansion project (ESSENCE; http://www.ctio.noao.edu/essence) was a SN survey optimized to constrain the equation of state (EoS) of the DE, using type Ia SNe at redshifts 0.2 < z < 0.8. The goal of the project was to determine the value of the EoS parameter w to within 10%. The survey used 30 half-nights per year with the CTIO Blanco 4 m telescope and the MOSAIC camera between 2003 and 2007 and discovered 228 type-Ia SNe. Observations were made in the VRI passbands with a cadence of a few days. SNe were discovered using an image subtraction pipeline, with candidates inspected and ranked for follow-up by eye.

Similarly, the Supernova Legacy Survey (SNLS; http://www.cfht.hawaii.edu/SNLS) was designed to precisely measure several hundred type Ia SNe at redshifts 0.3 < z < 1 or so (Astier et al. 2006). Imaging was done at the Canada-France-Hawaii Telescope (CFHT) with the Megaprime 340 Megapixel camera, providing a 1 deg2 FOV, in the course of 450 nights, between 2003 and 2008. About 1,000 SNe were discovered and followed up. Spectroscopic follow-up was done using the 8-10 m class telescopes, resulting in ~ 500 confirmed SNe.

The Lick Observatory Supernova Search (LOSS; Filippenko et al. 2001; http://astro.berkeley.edu/bait/public_html/kait.html) is an ongoing search for low-z (z < 0.1) SNe that began in 1998. The experiment makes use of the 0.76 m Katzman Automatic Imaging Telescope (KAIT). Observations are taken with a 500 × 500 pixel unfiltered CCD, with an FoV of 6.7 × 6.7 arcmin2, reaching R ~ 19 mag in 25 seconds (Li et al. 1999). As of 2011, the survey made 2.3 million observations, and discovered a total of 874 SNe by repeatedly monitoring ~ 15,000 large nearby galaxies (Leaman et al. 2011). The project is designed to tie high-z SNe to their more easily observed local counterparts, and also to rapidly respond to Gamma-Ray Burst (GRB) triggers and monitor their light curves.

The SDSS-II Supernova Search was designed to detect type Ia SNe at intermediate redshifts (0.05 < z < 0.4). This experiment ran 3-month campaigns from September to November during 2005 to 2007, by doing repeated scans of the SDSS Stripe 82 (Sako et al. 2008). Detections were made using image subtraction, and candidate type-Ia SNe were selected using the SDSS 5-bandpass imaging. The experiment resulted in the discovery and spectroscopic confirmation of ~ 500 type Ia SNe, and 80 core-collapse SNe. Although the survey was designed to discover SNe, the data have been made public, enabling the study of numerous types of variable objects including variable stars and AGN (Sesar et al. 2007; Bhatti et al. 2010).

Many other surveys and experiments have contributed to this vibrant field. Many amateur astronomers have also contributed; particularly noteworthy is the Puckett Observatory World Supernova Search (http://www.cometwatch.com/search.html) that involves several telescopes, and B. Monard's Supernova search.

4.2.3. Synoptic Surveys for Minor Bodies in the Solar System

The discovery that NEO impacts were potentially hazardous to human life motivated programs to discover the extent and nature of these objects, in particular the largest ones which could cause significant destruction, the Potentially Hazardous Asteroids (PHAs). A number of NEO surveys began working with NASA in order to fulfill the congressional mandate to discover all NEOs larger than 1 km in diameter. The number of NEOs discovered each year continues to increase, as surveys increase in sensitivity, with the current rate of discovery of ~ 1,000 NEOs per year.

The search for NEOs (Near Earth Objects) with CCDs largely began with the discovery of the asteroid 1989 UP by the Spacewatch survey (http://spacewatch.lpl.arizona.edu). They also made the first automated NEO detection, of the asteroid 1990 SS. The survey uses the Steward Observatory 0.9 m telescope, and a camera covering 2.9 deg2. A 1.8 m telescope with a FOV of 0.8 deg2 was commissioned in 2001. Until 1997, Spacewatch was the major discoverer of NEOs, in addition to a number of Trans-Neptunian objects (TNO).

The Near-Earth Asteroid Tracking (NEAT; http://neat.jpl.nasa.gov) began in 1996 and ran until 2006. Initially, the survey used a three-CCD camera mounted on the Samuel Oschin 48-inch Schmidt telescope on Palomar Mountain, until it was replaced by the PQ survey's 160 Megapixel camera in 2003. In addition, in 2000 the project began using data from the Maui Space Surveillance Site 1.2 m telescope. A considerable number of NEOs was found by this survey. The work was combined with Mike Brown's search for dwarf planets, and resulted in a number of such discoveries, also causing the demotion of Pluto to a dwarf planet status. The data were also used by the PQ survey team for a SN search.

The Lincoln Near-Earth Asteroid Research survey, conducted by the MIT Lincoln Laboratory (LINEAR; http://www.ll.mit.edu/mission/space/linear), began in 1997, using four 1 m telescopes at the White Sands Missile Range in New Mexico (Viggh et al. 1997). The survey has discovered ~ 2,400 NEOs to date, among ~ 230,000 other asteroid and comet discoveries.

The Lowell Observatory Near-Earth-Object Search (LONEOS; http://asteroid.lowell.edu/asteroid/loneos/loneos.html) ran from 1993 to 2008. The survey used a 0.6 m Schmidt telescope with an 8.3 deg2 FOV, located near Flagstaff, Arizona. It discovered 289 NEOs.

The Catalina Sky Survey (CSS; http://www.lpl.arizona.edu/css) began in 1999 and was extensively upgraded in 2003. It uses the telescopes and produces the data stream that is also used by the CRTS survey, described above. It became the most successful NEO discovery survey in 2004, and it has so far discovered ~ 70% of all known NEOs. On 6 October 2008 a small NEO, 2008 TC3, was discovered by their 1.5 m telescope. The object was predicted to impact the Earth within 20 hours of discovery. The asteroid disintegrated in the upper atmosphere over the Sudan desert; 600 fragments were recovered on the ground, amounting to "an extremely low cost sample return mission". This was the first time that an asteroid impact on Earth has been accurately predicted.

The Pan-STARRS survey, discussed above, also has a significant NEO search component. Another interesting concept was proposed by Tonry (2011).

Recently, the NEOWISE program (a part of the WISE mission) discovered 130 NEOs, among a much larger number of asteroids.

One major lesson of the PQ survey was that a joint asteroid/transient analysis is necessary, since slowly moving asteroids represented a major "contaminant" in a search for astrophysical transients. A major lesson of the Catalina surveys is that the same data streams can be shared very effectively between the NEO and transient surveys, thus greatly increasing the utility of the shared data.

The idea of detecting and measuring dark matter in the form of Massive Compact Halo Objects (MACHOs) using gravitational microlensing was first proposed by Paczynski (1986). The advent of large format CCDs made the measurements of millions of stars required to detect gravitational lensing a possibility in the crowded stellar fields toward the Galactic Bulge and the Magellanic Clouds. Three surveys began searching for gravitational microlensing, and the MACHO and EROS (Expérience pour la Recherche d'Objets Sombres) groups simultaneously announced the discovery of events toward the LMC in 1993 (Alcock et al. 1993; Aubourg et al. 1993). Meanwhile, the Optical Gravitational Lensing Experiment (OGLE) collaboration searched and found the first microlensing toward the Galactic bulge (Udalski et al. 1993). All three surveys continued to monitor ~ 100 deg2 in fields toward the Galactic Bulge, and discovered hundreds of microlensing events. A similar area was covered toward the Large Magellanic Cloud, and a dozen events were discovered. This result limited the contribution of MACHOs to halo dark matter to 20% (Alcock et al. 2000).
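To illustrate what these surveys look for in the light curves of millions of stars, the following sketch evaluates the standard point-source, point-lens magnification, A(u) = (u² + 2) / (u √(u² + 4)), where u is the lens-source separation in units of the Einstein radius. The function names and the event parameters (peak time, Einstein crossing time, impact parameter) are hypothetical values chosen for the example, not taken from any of the surveys above.

```python
import math

def magnification(u):
    """Point-source, point-lens magnification at separation u (Einstein radii)."""
    return (u * u + 2.0) / (u * math.sqrt(u * u + 4.0))

def light_curve(times, t0, t_e, u0):
    """Magnification versus time for uniform relative lens-source motion.

    t0: time of closest approach; t_e: Einstein crossing time;
    u0: minimum separation in Einstein radii (all illustrative here).
    """
    return [magnification(math.hypot(u0, (t - t0) / t_e)) for t in times]

# Hypothetical event: peak at day 50, 20-day crossing time, u0 = 0.3.
days = list(range(0, 101, 10))
for t, a in zip(days, light_curve(days, t0=50.0, t_e=20.0, u0=0.3)):
    print(f"day {t:3d}: A = {a:.2f}")
```

The resulting curve is symmetric about the peak and achromatic, which helps to distinguish microlensing events from intrinsic stellar variability.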

It was also predicted that the signal of planets could be detected during microlensing events (Mao and Paczynski 1991). Searches for such events were undertaken by the Global Microlensing Alert Network (GMAN) and PLANET (Probing Lensing Anomalies NETwork) collaborations beginning in 1995. The first detection of planetary lensing was in 2003, when the microlensing event OGLE-2003-BLG-235 was found to harbor a Jovian mass planet (Bond et al. 2004). Udalski et al. (2002) used the OGLE-III camera and telescope to perform a dedicated search for transits and discovered the first large sample of transiting planets.

Additional planetary microlensing event surveys such as Microlensing Follow-Up Network (MicroFun) and RoboNet-I,II (Mottram & Fraser 2008, Tsapras et al. 2009) continue to find planets by following microlensing events discovered by OGLE-IV and MOA (Microlensing Observations in Astrophysics; Yock et al. 2000) surveys, which discover hundreds of microlensing events toward the Galactic Bulge each year. Additional microlensing searches to further quantify the amount of matter in MACHOs have been carried out toward M31 by AGAPE (Ansari et al. 1997), and toward the LMC by the SuperMACHO project (http://www.ctio.noao.edu/supermacho/lightechos/SM/sm.html).

In addition to the microlensing events, the surveys revealed the presence of tens of thousands of variable stars and other sources, as also predicted by Paczynski (1986). The data collected by these surveys represent a gold mine of information on variable stars of all kinds.

There has been a considerable interest recently in the exploration of the time domain in the radio regime. Ofek et al. (2011) reviewed the transient survey literature in the radio regime. The new generation of radio surveys and facilities is enabled largely by the advances in the radio electronics (themselves driven by the mobile computing and cell phone demands), as well as the ICT. These are reflected in better receivers and correlators, among other elements, and since much of this technology is off the shelf, the relatively low cost of individual units enables production of large new radio facilities that collect vast quantities of data. Some of them include:

The Allen Telescope Array (ATA; http://www.seti.org/ata) consists of 42 6-m dishes covering a frequency range of 0.5 - 11 GHz. It has a maximum baseline of 300m and a field-of-view of 2.5 deg at 1.4 GHz. A number of surveys have been conducted with the ATA. The Fly's Eye Transient search (Siemion et al. 2012) was a 500-hour survey over 198 square degrees at 209 MHz with all dishes pointing in different directions, looking for powerful millisecond timescale transients. The ATA Twenty-centimeter Survey (ATATS; Croft et al. 2010, 2011) was a survey at 1.4 GHz constructed from 12 epoch visits over an area of ~ 700 deg2 for rare bright transients and to prove the wide-field capabilities of the ATA. Its catalog contains 4984 sources above 20 mJy (> 90% complete to ~ 40 mJy) with positional accuracies better than 20 arcsec and is comparable to the NVSS. Finally the Pi GHz Sky Survey (PiGSS; Bower et al. 2010, 2011) is a 3.1 GHz radio continuum survey of ~ 250,000 radio sources in the 10,000 deg2 region of sky with b > 30° down to ~ 1 mJy, with each source being observed multiple times. A subregion of ~ 11 deg2 will also be repeatedly observed to characterize variability on timescales of days to years.

The Low Frequency Array (LOFAR; http://www.lofar.org/) comprises 48 stations, each consisting of two types of dipole antenna: 48/96 LBA covering a frequency range of 10 - 90 MHz and 48/96 4x4 HBA tiles covering 110 - 240 MHz. There is a dense core of 6 stations, 34 more distributed throughout the Netherlands and 8 elsewhere in Europe (UK, France, Germany). LOFAR can simultaneously operate up to 244 independent beams and observe sources at Dec > 30. A recent observation reached 100 µJy (L-band) over a region of ~ 60 deg2 comprising ~ 10,000 sources. A number of surveys are planned, including all-sky surveys at 15, 30, 60, and 120 MHz and a ~ 1000 deg2 survey at 200 MHz, in order to study star, galaxy, and large-scale structure formation in the early universe and intercluster magnetic fields, to explore new regions of parameter space for serendipitous discovery, and to probe the epoch of reionization. Zenith and Galactic Plane transient monitoring programs at 30 and 120 MHz are also planned.

The field is largely driven towards the next generation radio facility, the Square Kilometer Array (SKA), described below. Two prototype facilities are currently being developed:

One of the SKA precursors is the Australian Square Kilometer Array Pathfinder (ASKAP; http://www.atnf.csiro.au/projects/askap), comprising 36 12-m antennas covering a frequency range of 700 - 1800 MHz. It has a FOV of 30 deg2 and a maximum baseline of 6 km. In 1 hr it will be able to reach 30 µJy per beam with a resolution of 7.5 arcsec. An initial construction of 6 antennas (BETA) is due for completion in 2012. The primary science goals of ASKAP include galaxy formation in the nearby universe through extragalactic HI surveys (WALLABY, DINGO), formation and evolution of galaxies across cosmic time with high-resolution, confusion-limited continuum surveys (EMU, FLASH), characterization of the radio transient sky through detection and monitoring of transient and variable sources (CRAFT, VAST), and the evolution of magnetic fields in galaxies over cosmic time through polarization surveys (POSSUM).

MeerKAT (http://www.ska.ac.za/meerkat/index.php) is the South African SKA pathfinder due for completion in 2016. It consists of 64 13.5-m dishes operating at ~ 1 GHz and 8 - 14.5 GHz, with baselines ranging from 29 m to 20 km. It complements ASKAP with a larger frequency range and greater sensitivity but a smaller field of view. It has enhanced surface brightness sensitivity with its shorter and longer baselines and will also have some degree of phased element array capability. The primary science drivers cover the same type of SKA precursor science area as ASKAP, but MeerKAT will focus on those areas where it has unique capabilities; these include extremely sensitive studies of neutral hydrogen in emission to z ~ 1.4 and highly sensitive continuum surveys to µJy levels at frequencies as low as 580 MHz. An initial test bed of seven dishes (KAT-7) has been constructed and is now being used as an engineering and science prototype.
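Some of the angular scales quoted for these arrays follow from the diffraction limit, θ ≈ k λ / D. The sketch below is a rough consistency check, not a description of how the facilities specify their performance: the function name is hypothetical, the factor k = 1.22 for a single dish and k = 1 for an interferometer baseline are common approximations, and the 1.4 GHz observing frequency assumed for ASKAP is our choice within its 700 - 1800 MHz band.

```python
import math

C_M_PER_S = 299_792_458.0  # speed of light

def diffraction_angle_deg(freq_hz, aperture_m, factor=1.0):
    """Diffraction-limited angular scale factor * lambda / D, in degrees."""
    wavelength_m = C_M_PER_S / freq_hz
    return math.degrees(factor * wavelength_m / aperture_m)

# ATA: primary beam of a single 6 m dish at 1.4 GHz (text quotes 2.5 deg).
ata_fov_deg = diffraction_angle_deg(1.4e9, 6.0, factor=1.22)

# ASKAP: resolution for a 6 km maximum baseline, assuming 1.4 GHz
# (text quotes 7.5 arcsec).
askap_res_arcsec = diffraction_angle_deg(1.4e9, 6000.0) * 3600.0

print(f"ATA field of view ~ {ata_fov_deg:.1f} deg")
print(f"ASKAP resolution  ~ {askap_res_arcsec:.1f} arcsec")
```

The same relation shows why a single-dish field of view shrinks with increasing dish diameter, and hence why MeerKAT's larger dishes trade field of view for sensitivity. ASKAP's much wider field of view is not set by this relation.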

4.2.6. Other Wavelength Regimes

Given that the variability is common to most point sources at high energies, and the fact that the detectors in this regime tend to be photon counting with a good time resolution, surveys in that regime are almost by definition synoptic in character.

An excellent example of a modern high-energy survey is the Fermi Gamma-Ray Space Telescope (FGST; http://www.nasa.gov/fermi), launched in 2008, that is continuously monitoring the γ-ray sky. Its Large Area Telescope (LAT) is a pair-production telescope that detects individual photons, with a peak effective area of ~ 8,000 cm2 and a field of view of ~ 2.4 sr. The observations can be binned into different time resolutions. The stable response of LAT and a combination of deep and fairly uniform exposure produces an excellent all-sky survey in the 100 MeV to 100 GeV range to study different types of γ-ray sources. This has resulted in a series of ongoing source catalog releases (Abdo et al. 2009, 2010, and more to come). The current source count approaches 2,000, with ~ 60% of them identified on other wavelengths.

4.3. Towards the Petascale Data Streams and Beyond

The next generation of sky surveys will be largely synoptic in nature, and will move us firmly to the Petascale regime. We are already entering it with facilities like Pan-STARRS, ASKAP, and LOFAR. These new surveys and instruments not only depend critically on the ICT, but also push it to a new performance regime.

The Large Synoptic Survey Telescope (LSST; Tyson et al. 2002, Ivezic et al. 2009; http://lsst.org) is a wide-field telescope that will be located at Cerro Pachón in Chile. The primary mirror will be 8.4 m in diameter, but because of the 3.4 m secondary, the collecting area is equivalent to that of a ~ 6.7 m telescope. While its development is still in the relatively early stages as of this writing, the project has very strong community support, reflected in its top ranking in the 2010 Astronomy and Astrophysics Decadal Survey produced by the U.S. National Academy of Sciences, and it may become operational before this decade is out. The LSST is planned to produce a 6-bandpass (0.3 - 1.1 micron) wide-field, deep astronomical survey of over 20,000 deg2 of the Southern sky, with many epochs per field. The camera will have a ~ 3.2 Gigapixel detector array covering ~ 9.6 deg2 in individual exposures, with 0.2 arcsec pixels.
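The camera figures quoted above are mutually consistent, as a short calculation shows. This is a back-of-the-envelope sketch: the function name is hypothetical, the field of view is treated as fully tiled by pixels with no gaps, and the 2 bytes per pixel used for the raw image size is an assumption for illustration rather than a project specification.

```python
def pixel_count(fov_deg2, pixel_scale_arcsec):
    """Number of pixels needed to tile a field of view at a given scale."""
    arcsec2_per_deg2 = 3600.0 ** 2
    return fov_deg2 * arcsec2_per_deg2 / pixel_scale_arcsec ** 2

n_pix = pixel_count(9.6, 0.2)       # values quoted in the text
raw_exposure_gb = n_pix * 2 / 1e9   # assumes 16-bit (2-byte) pixels

print(f"~ {n_pix / 1e9:.1f} Gpix per exposure")
print(f"~ {raw_exposure_gb:.1f} GB of raw pixels per exposure (assumed 16-bit)")
```

The result, about 3.1 Gpix, agrees with the ~ 3.2 Gigapixel array size to within the rounding of the quoted field of view and pixel scale.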

LSST will take more than 800 panoramic images each night, with 2 exposures per field, covering the accessible sky twice each week. The data (images, catalogs, alerts) will be continuously generated and updated every observing night. In addition, calibration and co-added images, and the resulting catalogs, will be generated on a slower cadence, and used for data quality assessments. The survey is expected to produce ~ 30 TB of data per night, for a total of ~ 60 PB over its envisioned duration, and the final source catalog is expected to have more than 20 billion rows. Its scientific goals and strategies are described in detail in the LSST Science Book (Ivezic et al. 2009). Processing and analysis of this huge data stream poses a number of challenges in the arena of real-time data processing, distribution, archiving, and analysis.

The currently ongoing renaissance in the continuum radio astronomy at cm and m scale wavelengths is leading towards the facilities that will surpass even the data rates expected for the LSST, and that will move us to the Exascale regime.

The Square Kilometer Array (SKA; http://www.skatelescope.org/) will be the world's largest radio telescope, hoped to be operational in the mid-2020s. It is envisioned to have a total collecting area of approximately 1 million m2 (thus the name). It will provide continuous frequency coverage from 70 MHz to 30 GHz employing phased arrays of dipole antennas (low frequency), tiles (mid frequency) and dishes (high frequency) arranged over a region extending out to ~ 3,000 km. Its key science projects will focus on studying pulsars as extreme tests of general relativity, mapping a billion galaxies to the highest redshifts by their 21-cm emission to understand galaxy formation and evolution and dark matter, observing the primordial distribution of gas to probe the epoch of reionization, investigating cosmic magnetism, and surveying all types of transient radio phenomena.

The data processing for the SKA poses significant challenges, even if we extrapolate Moore's law to its projected operations. The data will stream from the detectors into the correlator at a rate of ~ 4.2 PB/s, and then from the correlator to the visibility processors at rates between 1 and 500 TB/s, depending on the observing mode, which will require processing capabilities of ~ 200 Pflops - 2.5 Eflops. Subsequent image formation needs ~10 Pflops to create data products (~ 0.5 - 10 PB/day), which would be available for science analysis and archiving, the total computational costs of which could easily exceed those of the pipeline. Of course, this is not just a matter of hardware provision, even if it is special purpose built, but also high computational complexity algorithms for wide field imaging techniques, deconvolution, Bayesian source finding, and other tasks. Each operation will also place different constraints on the computational infrastructure, with some being memory bound and some CPU bound that will need to be optimally balanced for maximum throughput. Finally the power required for all this processing will also need to be addressed - assuming the current trends, the SKA data processing will consume energy at a rate of ~ 1 GW. These are highly non-trivial hardware and infrastructure challenges.
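To put the quoted rates on a common footing, the sketch below converts the per-second figures into daily volumes and compares them with the final data products. It assumes continuous operation over 24 hours and decimal prefixes (1 PB = 1000 TB); the function and variable names are hypothetical, and the numbers are simply those given in the text.

```python
SECONDS_PER_DAY = 86_400
UNIT_IN_PB = {"TB": 1e-3, "PB": 1.0}

def daily_volume_pb(rate, unit):
    """Convert a sustained per-second data rate to PB per day."""
    return rate * UNIT_IN_PB[unit] * SECONDS_PER_DAY

# Rates quoted in the text, assuming uninterrupted operation.
into_correlator = daily_volume_pb(4.2, "PB")
correlator_out_min = daily_volume_pb(1.0, "TB")
correlator_out_max = daily_volume_pb(500.0, "TB")
products_max_pb_per_day = 10.0

print(f"Into correlator:   ~ {into_correlator:,.0f} PB/day")
print(f"Out of correlator: ~ {correlator_out_min:,.1f} to "
      f"{correlator_out_max:,.0f} PB/day")
print(f"Minimum reduction to data products: "
      f"~ {correlator_out_min / products_max_pb_per_day:.1f}x")
```

Even in the least demanding observing mode, the stream must be reduced by roughly an order of magnitude on the fly, since volumes of this size cannot be stored for later reprocessing.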

The real job of science, data analysis and knowledge discovery, starts after all this processing and delivery of processed data to the archives. Effective, scalable software and methodology needed for these tasks does not exist yet, at least in the public domain.

A counterpart to the relatively shallow, wide-field surveys discussed above is provided by various deep fields that cover much smaller, selected areas, generally based on an initial deep set of observations by one of the major space-based observatories. Their scientific goals are almost always in the arena of galaxy formation and evolution: the depth is required in order to obtain adequate measurements of very distant galaxies, and the areas surveyed are too small to use for much of the Galactic structure work. This is a huge and vibrant field of research, and we cannot do it justice in the limited space here; we describe very briefly a few of the more popular deep surveys. More can be found, e.g., in the reviews by Ferguson et al. (2000), Bowyer et al. (2000), Brandt & Hasinger (2005), and the volume edited by Cristiani et al. (2002).

The prototype of these is the Hubble Deep Field (HDF; Williams et al. 1996; http://www.stsci.edu/ftp/science/hdf/hdf.html). It was imaged with the HST in 1995 using the Director's Discretionary Time, with 150 consecutive HST orbits and the WFPC2 instrument, in four filters ranging from near-UV to near-IR, F300W, F450W, F606W, and F814W. The ~ 2.5 arcmin field is located at RA = 12:36:49.4, Dec = +62:12:58 (J2000); a corresponding HDF-South, centered at RA = 22:32:59.2, Dec = -60:33:02.7 (J2000) was observed in 1998. An even deeper Hubble Ultra Deep Field (HUDF), centered at RA = 03:32:39.0, Dec = -27:47:29 (J2000) and covering 11 arcmin2, was observed with 400 orbits in 2003-2004, using the ACS instrument in 4 bands: F435W, F606W, F775W, and F850LP, and with 192 orbits in 2009 with the WFC3 instrument in 3 near-IR filters, F105W, F125W, and F160W. Depths equivalent to V ~ 30 mag were reached. There has been a large number of follow-up studies of these deep fields, involving many ground-based and space-based observatories, mainly on the subjects of galaxy formation and evolution at high redshifts.

The Groth Survey Strip field is a 127 arcmin2 region that has been observed with the HST in both broad V and I bands, and with a variety of ground-based and space-based observatories. It was expanded to the Extended Groth Strip in 2004-2005, covering 700 arcmin2 using 500 exposures with the ACS instrument on the HST. The All-Wavelength Extended Groth Strip International Survey (AEGIS) continues to study this field at multiple wavelengths.

The Chandra Deep Field South (CDF-S; http://www.eso.org/~vmainier/cdfs_pub/, Giacconi et al. 2001) was obtained by the Chandra X-ray telescope looking at the same patch of the sky for 11 consecutive days in 1999-2000, for a total of 1 million seconds. The field covers 0.11 deg2 centered at RA = 03:32:28.0, Dec = -27:48:30 (J2000). These X-ray observations were followed up extensively by many other observatories.
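The field centers in this section are given in sexagesimal form, while most catalog queries expect decimal degrees. The sketch below converts the quoted coordinates and computes the angular separation between the HUDF and CDF-S centers. The helper names are hypothetical, and the haversine formula is used here only as one convenient, numerically stable choice for small separations.

```python
import math

def hms_to_deg(hms):
    """Right ascension 'hh:mm:ss.s' to decimal degrees (15 deg per hour)."""
    h, m, s = (float(x) for x in hms.split(":"))
    return 15.0 * (h + m / 60.0 + s / 3600.0)

def dms_to_deg(dms):
    """Declination '+dd:mm:ss.s' to decimal degrees, preserving the sign."""
    sign = -1.0 if dms.strip().startswith("-") else 1.0
    d, m, s = (abs(float(x)) for x in dms.split(":"))
    return sign * (d + m / 60.0 + s / 3600.0)

def separation_arcmin(ra1, dec1, ra2, dec2):
    """Great-circle separation (haversine formula); inputs in degrees."""
    r1, d1, r2, d2 = (math.radians(v) for v in (ra1, dec1, ra2, dec2))
    a = (math.sin((d2 - d1) / 2.0) ** 2
         + math.cos(d1) * math.cos(d2) * math.sin((r2 - r1) / 2.0) ** 2)
    return math.degrees(2.0 * math.asin(math.sqrt(a))) * 60.0

# Field centers (J2000) as quoted in the text.
hudf = (hms_to_deg("03:32:39.0"), dms_to_deg("-27:47:29"))
cdfs = (hms_to_deg("03:32:28.0"), dms_to_deg("-27:48:30"))

print(f"HUDF center:  RA = {hudf[0]:.4f} deg, Dec = {hudf[1]:.4f} deg")
print(f"CDF-S center: RA = {cdfs[0]:.4f} deg, Dec = {cdfs[1]:.4f} deg")
print(f"Separation ~ {separation_arcmin(*hudf, *cdfs):.1f} arcmin")
```

The two centers are separated by only a few arcmin, much less than the linear extent implied by the 0.11 deg2 area of the CDF-S, so the HUDF lies within the Chandra field.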

The Great Observatories Origins Deep Survey (GOODS; http://www.stsci.edu/science/goods/; Dickinson et al. 2003, Giavalisco et al. 2004) builds on the HDF-N and CDF-S by targeting fields centered on those areas, and covers approximately 0.09 deg2 using a number of NASA and ESA space observatories: Spitzer, Hubble, Chandra, Herschel, XMM-Newton, etc., as well as a number of deep ground-based studies and redshift surveys. The main goal is to study the distant universe to the faintest fluxes across the electromagnetic spectrum, with a focus on the formation and evolution of galaxies.

The Subaru Deep Field (http://www.naoj.org/; Kashikawa et al. 2004) was observed initially over 30 nights at the 8.2 m Subaru telescope on Mauna Kea, using the Suprime-Cam instrument. The field, centered at RA = 13:24:38.9, Dec = +27:29:26 (J2000), covers a patch of 34 × 27 arcmin. It was imaged in the BVRi'z' bands, and narrow bands centered at 8150 Å and 9196 Å. One of the main aims was to catalog Lyman-break galaxies out to large redshifts, and get samples of Lyα emitters (LAE) as probes of the very early galaxy evolution. Over 200,000 galaxies were detected, yielding samples of hundreds of high-redshift galaxies. These were followed by extensive spectroscopy and imaging on other wavelengths.

The Cosmological Evolution Survey (COSMOS; http://cosmos.astro.caltech.edu; Scoville et al. 2007) was initiated as an HST Treasury Project, but it expanded to include deep observations from a range of facilities, both ground-based and space-based. The 2 deg2 field is centered at RA = 10:00:28.6, Dec = +02:12:21.0 (J2000). The HST observations were carried out in 2004-2005. These were followed by a large number of ground-based and space-based observatories. The main goals were to detect 2 million objects down to the limiting magnitude IAB > 27 mag, including > 35,000 Lyman Break Galaxies and extremely red galaxies out to z ~ 5. The follow-up included extensive spectroscopy and imaging on other wavelengths.

The Cosmic Assembly Near-IR Deep Extragalactic Legacy Survey (CANDELS; 2010-2013; http://candels.ucolick.org/) uses deep HST imaging of over 250,000 galaxies with the WFC3/IR and ACS instruments to study galaxy evolution at 1.5 < z < 8. The survey is conducted at two depths: moderate (2 orbits) over 0.2 deg2, and deep (12 orbits) over 0.04 deg2. An additional goal is to refine the constraints on the time variation of the cosmic equation-of-state parameter, leading to a better understanding of dark energy.

A few ground-based deep surveys (in addition to the follow-up of those listed above) are also worthy of note. They include:

The Deep Lens Survey (DLS; 2001-2006; Wittman et al. 2002; http://dls.physics.ucdavis.edu) covered ~ 20 deg2 spread over 5 fields, using CCD mosaics at KPNO and CTIO 4 m telescopes, with 0.257 arcsec/pixel, in BVRz' bands. The survey also had a significant synoptic component (Becker et al. 2004).

The NOAO Deep Wide Field Survey (NDWFS; http://www.noao.edu/noao/noaodeep; Jannuzi & Dey 1999) covered ~ 18 deg2 spread over 2 fields, also using CCD mosaics at KPNO and CTIO 4 m telescopes, in BwRI bands, reaching point source limiting magnitudes of 26.6, 25.8, and 25.5 mag, respectively. These fields have been followed up extensively at other wavelengths.

The CFHT Legacy Survey (CFHTLS; 2003-2009; http://www.cfht.hawaii.edu/Science/CFHLS) is a community-oriented service project that covered 4 deg2 spread over 4 fields, using the MegaCam imager at the CFHT 3.6 m telescope, over an equivalent of 450 nights, in u*g'r'i'z' bands. The survey served a number of different projects.

Deep fields have had a transformative effect on studies of galaxy formation and evolution. They also illustrated the power of multi-wavelength studies, as observations in each regime leveraged those in the others. Finally, they illustrated the power of combining deep space-based imaging with deep follow-up spectroscopy at large ground-based telescopes.

Many additional deep or semi-deep fields have been covered from the ground in a similar manner. We cannot do justice to them or to the entire subject in the limited space available here, and we direct the reader to a very extensive literature that resulted from these studies.

While imaging tends to be the first step in many astronomical ventures, physical understanding often comes from spectroscopy. Thus, spectroscopic surveys naturally follow the imaging ones. However, they tend to be much more focused and limited scientifically: there are seldom surprising new uses for the spectra, beyond the original scientific motivation.

Two massive, wide-field redshift surveys that have dramatically changed the field used highly multiplexed (multi-fiber or multi-slit) spectrographs; they are the 2dF and SDSS. A few other significant previous redshift surveys were mentioned in Sec. 2.

Several surveys were done using the 2-degree field (2dF) multi-fiber spectrograph at the Anglo-Australian Telescope (AAT) at Siding Spring, Australia. The 2dF Galaxy Redshift Survey (2dFGRS; 1997-2002; Colless 1999; Colless et al. 2001) observed ~ 250,000 galaxies down to mB ~ 19.5 mag. The 2dF Quasar Redshift Survey (2QZ; 1997-2002; Croom et al. 2004) observed ~ 25,000 quasars down to mB ~ 21 mag. The spectroscopic component of the SDSS eventually produced redshifts and other spectroscopic measurements for ~ 930,000 galaxies, ~ 120,000 quasars, and nearly 500,000 stars and other objects. These massive spectroscopic surveys greatly expanded our knowledge of the LSS and the evolution of quasars, and led to numerous other studies.

Deep imaging surveys tend to be followed by the deep spectroscopic ones, since redshifts are essential for their interpretation and scientific uses. Some of the notable examples include:

The Deep Extragalactic Evolution Probe (DEEP; Vogt et al. 2005, Davis et al. 2003) survey is a two-phase project using the 10 m Keck telescopes to obtain spectra of faint galaxies over ~ 3.5 deg2, to study the evolution of their properties and of their clustering out to z ~ 1.5. Most of the data were obtained using the DEIMOS multi-slit spectrograph, with targets preselected using photometric redshifts. Spectra were obtained for ~ 50,000 candidate galaxies down to ~ 24 mag, in the redshift range z ~ 0.7 - 1.55, with ~ 80% yielding redshifts.

The VIRMOS-VLT Deep Survey (VVDS; Le Fevre et al. 2005) is an ongoing comprehensive imaging and redshift survey of the deep universe, complementary to the Keck DEEP survey. An area of ~ 16 deg2 in four separate fields is covered using the VIRMOS multi-object spectrograph on the 8.2 m VLT telescopes. With 10 arcsec slits, spectra can be measured for 600 objects simultaneously. Targets were selected from a UBRI photometric survey (limiting magnitude IAB = 25.3 mag) carried out with the CFHT. A total of over 150,000 redshifts will be obtained (~ 100,000 to IAB = 22.5 mag, ~ 50,000 to IAB = 24 mag, and ~ 1,000 to IAB = 26 mag), providing insight into galaxy and structure formation over a very broad redshift range, 0 < z < 5.

zCOSMOS (Lilly et al. 2007) is a similar survey on the VLT using VIRMOS, but only covering the 1.7 deg2 COSMOS ACS field down to a magnitude limit of IAB < 22.5 mag. Approximately 20,000 galaxies were measured over the redshift range 0.1 < z < 1.2, comparable in survey parameters to the 2dFGRS, with a further 10,000 within the central 1 deg2, selected with photometric redshifts in the range 1.4 < z < 3.0.

The currently ongoing Baryon Oscillation Spectroscopic Survey (BOSS), due to be completed in early 2014 as part of SDSS-III, will cover an area of ~ 10,000 deg2 and map the spatial distribution of ~ 1.5 million luminous red galaxies to z = 0.7, and absorption lines in the spectra of ~ 160,000 quasars to z ~ 3.5. Using the characteristic scale imprinted on these distributions by baryon acoustic oscillations in the early universe as a standard ruler, it will be able to determine the angular diameter distance and the cosmic expansion rate with a precision of ~ 1 to 2%, in order to constrain theoretical models of dark energy.
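The standard-ruler idea can be illustrated with a minimal sketch. It assumes a flat ΛCDM cosmology and a comoving ruler length of ~ 150 Mpc; these numbers, the parameter defaults, and the function names are illustrative assumptions, not values taken from BOSS or from this text. A ruler of known comoving length subtends an angle equal to its length divided by the comoving distance to the redshift in question, so measuring that angle constrains the distance.

```python
import math

C_KM_S = 299792.458  # speed of light in km/s


def comoving_distance_mpc(z, h0=70.0, omega_m=0.3, steps=1000):
    """Comoving distance (Mpc) in a flat LambdaCDM model.

    h0 (km/s/Mpc) and omega_m are assumed, illustrative values.
    The integral of dz / E(z) is evaluated with the trapezoid rule.
    """
    omega_l = 1.0 - omega_m  # flatness assumption

    def inv_e(zz):
        return 1.0 / math.sqrt(omega_m * (1.0 + zz) ** 3 + omega_l)

    dz = z / steps
    total = 0.5 * (inv_e(0.0) + inv_e(z))
    for i in range(1, steps):
        total += inv_e(i * dz)
    return (C_KM_S / h0) * total * dz


def ruler_angle_deg(z, ruler_mpc=150.0, **cosmology):
    """Angle (deg) subtended by a comoving ruler of length ruler_mpc at redshift z.

    The 150 Mpc default is an assumed round number for the BAO scale.
    The angular diameter distance is the comoving distance divided by (1 + z);
    for a comoving ruler the (1 + z) factors cancel.
    """
    return math.degrees(ruler_mpc / comoving_distance_mpc(z, **cosmology))


if __name__ == "__main__":
    for z in (0.35, 0.7):
        print(f"z = {z}: D_C = {comoving_distance_mpc(z):.0f} Mpc, "
              f"angle = {ruler_angle_deg(z):.2f} deg")
```

In this toy model, changing the assumed cosmological parameters changes the predicted angle at a given redshift, which is the sense in which the measured scale constrains the expansion history.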

Its successor, the BigBOSS survey (http://bigboss.lbl.gov), will utilize a purpose-built 5,000 fiber multi-object spectrograph, to be mounted at the prime focus of the KPNO 4 m Mayall telescope, covering a 3° FOV. The project will conduct a series of redshift surveys over ~ 500 nights spread over 5 years, with a primary goal of constraining models of dark energy, using different observational tracers: clustering of galaxies out to z ~ 1.7, and Lyα forest lines in the spectra of quasars at 2.2 < z < 3.5. To reach these goals, the survey plans to obtain spectra of ~ 20 million galaxies and ~ 600,000 quasars over a 14,000 deg2 area.

Another interesting, incipient survey uses the Large Sky Area Multi-Object Fiber Spectroscopic Telescope (LAMOST; http://www.lamost.org/website/en), one of the National Major Scientific Projects undertaken by the Chinese Academy of Sciences. The custom-built 4 m telescope has a 5° FOV, accommodating 4,000 optical fibers for highly multiplexed spectroscopy.

A different approach uses panoramic, slitless spectroscopy surveys, where a dispersing element (an objective prism, a grating, or a grism, i.e., a grating ruled on a prism) is placed in front of the telescope optics, thus providing wavelength-dispersed images for all objects. This approach tends to work only for bright sources in ground-based observations, since any given pixel gets the signal from the object only at the corresponding wavelength, while the sky background that dominates the noise has contributions from all wavelengths. For space-based observations, this is much less of a problem. Crowding can also be a problem, due to the overlap of adjacent spectra. Traditionally, slitless spectroscopy surveys have been used to search for emission-line objects (e.g., star-forming galaxies and AGN), or objects with a particular continuum signature (e.g., low-metallicity stars).
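The background penalty of slitless spectroscopy can be illustrated with a toy Poisson estimate. This is a minimal sketch under simplifying assumptions: pure photon noise, and a flat sky spectrum so that every resolution element within the bandpass contributes equally to the sky on a given pixel. The function name and all count values are hypothetical.

```python
import math


def snr_per_pixel(source_counts, sky_counts_per_element, n_sky_elements):
    """Photon-noise S/N for one spectral pixel.

    source_counts: object counts on the pixel (a single resolution element).
    sky_counts_per_element: sky counts contributed by one resolution element.
    n_sky_elements: number of resolution elements of sky that land on the
        pixel (1 with a slit; the full bandpass without one).
    """
    sky = sky_counts_per_element * n_sky_elements
    return source_counts / math.sqrt(source_counts + sky)


# Hypothetical numbers for a faint, background-limited source.
source = 100.0
sky_per_element = 400.0
elements_in_bandpass = 100

with_slit = snr_per_pixel(source, sky_per_element, 1)
slitless = snr_per_pixel(source, sky_per_element, elements_in_bandpass)

print(f"S/N with slit: {with_slit:.2f}")
print(f"S/N slitless:  {slitless:.2f}")
print(f"penalty factor: {with_slit / slitless:.1f}")
```

In the background-limited regime the penalty approaches the square root of the number of resolution elements in the bandpass, which is why the technique favors bright sources from the ground and fares better in space, where the sky background is much lower.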

One good example is the set of surveys done at the Hamburg Observatory, covering both the Northern sky from the Calar Alto observatory in Spain, and the Southern sky from ESO (http://www.hs.uni-hamburg.de/EN/For/Exg/Sur), which used wide-angle objective prism plates to look for AGN candidates, hot stars, and optical counterparts to ROSAT X-ray sources. For each 5.5 × 5.5 deg2 field in the survey area, two objective prism plates were taken, as well as an unfiltered direct plate to determine accurate positions and recognize overlaps. All plates were subsequently digitized and, under good seeing conditions, a spectral resolution of 45 Å at Hγ was achieved. Candidates selected from these slitless spectra were followed up with targeted spectroscopy, producing ~ 500 AGN and over 2,000 other emission-line sources, and a large number of extremely metal-poor stars.

Since sky surveys cover so many dimensions of the OPS, but only a subset of them, comparisons of surveys can be difficult if not downright meaningless. What really matters is the scientific discovery potential, which very much depends on the primary science goals; for example, a survey optimized to find supernovae may not be very efficient for studies of galaxy clusters, and vice versa. However, for just about any scientific application, the area coverage and depth, and, in the case of synoptic sky surveys, also the number of epochs per field, are the most basic characteristics, and can be compared fairly, at least in any given wavelength regime. Here we attempt to define some general indicators of the scientific discovery potential for sky surveys, based on these general parameters.

Often, but misleadingly, surveys are compared using the etendue, the product of the telescope area, A, and the solid angle subtended by the individual exposures, Ω. However, AΩ simply reflects the properties of a telescope and the instrument optics. It implies nothing whatsoever about a survey that may be done using that telescope and instrument combination, as it does not say anything about the depth of the individual exposures, the total area coverage, and the number of exposures per field. The AΩ is the same for a single, short exposure, and for a survey that has thousands of fields, several bandpasses, deep exposures, and hundreds of epochs for each field. Clearly, a more appropriate figure of merit (FoM), or a set thereof, is needed.
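A short sketch makes the point concrete. The telescope, the field of view, and both survey descriptions below are hypothetical, and the aperture is assumed to be circular and unobstructed. The etendue function takes only the telescope and camera properties, so two very different surveys done with the same hardware necessarily share the same value.

```python
import math


def etendue_m2_deg2(aperture_diameter_m, fov_deg2):
    """Etendue (A times Omega) in m^2 deg^2 for a circular, unobstructed aperture."""
    area_m2 = math.pi * (aperture_diameter_m / 2.0) ** 2
    return area_m2 * fov_deg2


# Hypothetical telescope + camera combination.
DIAMETER_M = 4.0
FOV_DEG2 = 3.0

# Two hypothetical surveys using that same hardware.
surveys = {
    "single snapshot": {"fields": 1, "bands": 1, "t_exp_s": 30, "epochs": 1},
    "deep synoptic": {"fields": 2000, "bands": 5, "t_exp_s": 600, "epochs": 200},
}

for name, design in surveys.items():
    # None of the survey design parameters enter the etendue.
    value = etendue_m2_deg2(DIAMETER_M, FOV_DEG2)
    print(f"{name}: etendue = {value:.1f} m^2 deg^2, design = {design}")
```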

We propose that a fair measure of a general scientific potential of a survey would be a product of a measure of its average depth, and its coverage of the relevant dimensions of the OPS. Or: how deep, how wide, and how often, and for how long.

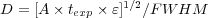

As a quantitative measure of depth in an average single observation, we can define a quantity that is roughly proportional to the S/N for background-limited observations for an unresolved source, namely:

$$D = \frac{[A \, t_{\rm exp} \, \epsilon]^{1/2}}{\mathrm{FWHM}}$$

where A is the effective collecting area of the telescope in m2, texp is the typical exposure length in sec, ε is the overall throughput efficiency of the telescope+instrument, and FWHM is the typical PSF or beam size full-width at half-maximum (i.e., the seeing, for the ground-based visible and NIR observations) in arcsec.
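As a minimal numerical sketch, assume the depth measure takes the form D = (A × texp × ε)^1/2 / FWHM, which is what the definitions above and the background-limited S/N scaling suggest. The function name and the example numbers are hypothetical.

```python
import math


def depth_measure(area_m2, t_exp_s, efficiency, fwhm_arcsec):
    """Depth indicator D = sqrt(A * t_exp * efficiency) / FWHM.

    Assumed form: roughly proportional to the S/N of an unresolved source
    in a background-limited exposure. Units are m, s^(1/2), arcsec^(-1).
    """
    if area_m2 <= 0 or t_exp_s <= 0 or fwhm_arcsec <= 0:
        raise ValueError("area, exposure time, and FWHM must be positive")
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in the interval (0, 1]")
    return math.sqrt(area_m2 * t_exp_s * efficiency) / fwhm_arcsec


# Hypothetical survey: 10 m^2 collecting area, 60 s exposures,
# 50% throughput, 1.5 arcsec seeing.
base = depth_measure(10.0, 60.0, 0.5, 1.5)

# Quadrupling the exposure time doubles D; halving the seeing does the same.
longer = depth_measure(10.0, 240.0, 0.5, 1.5)
sharper = depth_measure(10.0, 60.0, 0.5, 0.75)

print(f"base D = {base:.2f}")
print(f"4x exposure: {longer / base:.2f} x base")
print(f"half the FWHM: {sharper / base:.2f} x base")
```

The square-root dependence on exposure time versus the linear dependence on image quality shows why, under this assumed form, good seeing is as valuable as a much longer integration.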

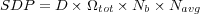

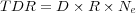

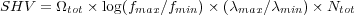

The coverage of the OPS depends on the number of bandpasses (or, in the case of radio or high energy surveys, octaves; or, in the case of spectroscopic surveys, the number of independent resolution elements in a typical survey spectrum), Nb, and the total survey area covered, Ωtot, expressed as a fraction of the entire sky. In the case of synoptic sky surveys, additional relevant parameters include the area coverage rate regardless of the bandpass (in